Abstract

Federated AIoT trains AI models on data distributed across IoT devices. In practical AIoT systems, however, heterogeneous devices cause both data heterogeneity and varying amounts of device staleness, which can reduce model performance or lengthen federated training. When addressing the impact of device delays, existing FL frameworks improperly treat them as independent of data heterogeneity. In this paper, we explore a scenario where device delays and data heterogeneity are closely correlated, and propose FedDC, a new technique to mitigate the impact of device delays in such cases. Our basic idea is to use gradient inversion to learn about each device’s local data distribution, and to use that knowledge to compensate for the impact of device delays on the devices’ model updates. Experimental results on heterogeneous IoT devices show that FedDC can improve FL performance by 34% under high device delays, without impairing the privacy of the devices’ local data.

FL in AIoT: Challenges

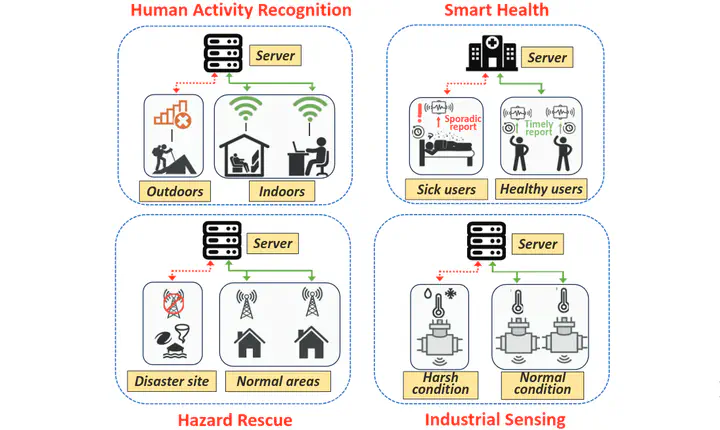

Federated Learning (FL) in the Artificial Intelligence of Things (AIoT) is often used to train AI models when application data is generated at distributed sources and cannot be processed at a central server because of its large size and privacy concerns. Example applications include Human Activity Recognition, Smart Health, Hazard Rescue, and Industrial Sensing.

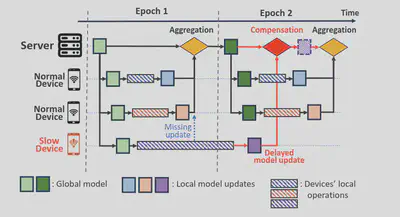

FL in AIoT environments faces two major challenges: data heterogeneity and device delays. Non-IID data distributions degrade the global model’s performance, and device delays can significantly slow down FL training.

Conventional FL solutions fail in such cases because they either:

- Ignore delayed updates (synchronous FL)

- Down-weight them indiscriminately (asynchronous FL)

- Assume independence between delay and data (which is rarely true)
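To make the second bullet concrete, here is a minimal sketch of staleness-based down-weighting of the kind commonly used in asynchronous FL. The polynomial form `(1 + staleness) ** -a`, the exponent `a`, and the function names are illustrative assumptions, not taken from FedDC or from any specific framework.

```python
def staleness_weight(staleness: int, a: float = 0.5) -> float:
    """Assumed polynomial decay: fresh updates get weight 1, stale ones less."""
    return (1.0 + staleness) ** (-a)


def aggregate(updates):
    """Weighted average of updates.

    `updates` is a list of (update_vector, staleness) pairs, where
    update_vector is a list of floats and staleness counts how many
    global rounds old the update is.
    """
    weights = [staleness_weight(s) for _, s in updates]
    total = sum(weights)
    dim = len(updates[0][0])
    return [
        sum(w * u[i] for w, (u, _) in zip(weights, updates)) / total
        for i in range(dim)
    ]


# A fresh update and one that is three rounds stale.
fresh = ([1.0, 0.0], 0)
stale = ([0.0, 1.0], 3)
combined = aggregate([fresh, stale])
```

The weight depends only on how late an update arrives, never on what data produced it. If slow devices hold data that other devices lack, this scheme suppresses exactly that knowledge.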

Our Solution: FedDC

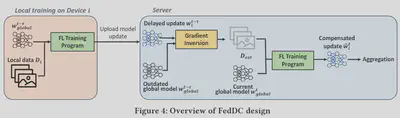

FedDC introduces a gradient inversion-based Delay Compensator that estimates and rectifies the error introduced by delays at the FL server, requiring no changes to IoT devices’ local training.

Key Idea

In our design, the FL server uses gradient inversion to approximate the local data distribution of a slow device from its delayed model update. It then estimates what the delayed update would have been had it been computed from the current global model, so that the critical knowledge carried by the update is preserved in aggregation.
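The delay compensation idea above can be illustrated on a deliberately tiny problem. The sketch below assumes a toy model whose loss is `0.5 * mean(||w - x||^2)`, so a device's gradient is `w - mean(x)`; the server fits one synthetic sample by iterative gradient matching and then recomputes the gradient at the current global model. The model, the single-step update, and all function names are assumptions made for illustration. FedDC itself operates on neural network updates, and its exact procedure is not reproduced here.

```python
def local_gradient(w, data):
    """Gradient of 0.5 * mean ||w - x||^2 over `data`, i.e. w - mean(x)."""
    n = len(data)
    mean = [sum(x[i] for x in data) / n for i in range(len(w))]
    return [wi - mi for wi, mi in zip(w, mean)]


def invert_gradient(w_stale, g_observed, steps=60, lr=0.25):
    """Fit one synthetic sample whose gradient at w_stale matches g_observed.

    Minimizes sum((grad(w_stale; x_syn) - g_observed)^2) by gradient descent.
    """
    x_syn = [0.0] * len(w_stale)
    for _ in range(steps):
        # Gradient-matching residual for the current synthetic sample.
        resid = [(w - x) - g for w, x, g in zip(w_stale, x_syn, g_observed)]
        # The matching loss has derivative -2 * resid with respect to x_syn.
        x_syn = [x + lr * 2.0 * r for x, r in zip(x_syn, resid)]
    return x_syn


def compensated_gradient(w_stale, w_current, g_observed):
    """Estimate the gradient the device would have sent at w_current."""
    x_syn = invert_gradient(w_stale, g_observed)
    return local_gradient(w_current, [x_syn])


# The device computed its gradient on an outdated global model.
device_data = [[1.0, 3.0], [3.0, 5.0]]
w_stale, w_current = [0.0, 0.0], [1.0, 1.0]
g_delayed = local_gradient(w_stale, device_data)

g_compensated = compensated_gradient(w_stale, w_current, g_delayed)
g_ideal = local_gradient(w_current, device_data)  # unavailable to the server
```

In this toy the synthetic sample converges to the mean of the device's data, a summary of its distribution rather than any individual sample, and the compensated gradient matches the ideal one. With realistic models the inversion is only approximate and far more expensive.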

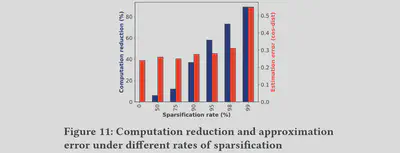

Improve FL Server Compute Efficiency and Privacy Protection

To further reduce the time cost of the computationally expensive gradient inversion operation, we propose methods such as gradient sparsification and selective computation, which restrict the computation to the most important gradients. As shown in the figure below, using only the top 5% of gradients reduces the number of gradient inversion iterations by 55% with only a slight increase in error.

In addition, both prior work and our own results show that sparsification reduces the ability to reconstruct training data from the trained model, so it also helps protect privacy.
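The sparsification step described above can be sketched as a top-k selection by magnitude, followed by a matching loss restricted to the kept entries. The function names and the plain-list gradient representation are assumptions for illustration; the source does not specify how FedDC selects or stores the important gradients.

```python
def top_k_indices(grad, fraction=0.05):
    """Indices of the largest-magnitude entries, keeping at least one."""
    k = max(1, int(len(grad) * fraction))
    order = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)
    return set(order[:k])


def sparsify(grad, fraction=0.05):
    """Zero out every entry that is not among the top-k by magnitude."""
    keep = top_k_indices(grad, fraction)
    return [g if i in keep else 0.0 for i, g in enumerate(grad)]


def masked_matching_loss(g_synthetic, g_observed, keep):
    """Squared gradient-matching error over the kept entries only."""
    return sum((g_synthetic[i] - g_observed[i]) ** 2 for i in keep)


# Keep the top 25% of a small gradient so the result is easy to inspect.
grad = [0.1, -3.0, 0.2, 2.0, -0.05, 0.0, 0.3, -0.4]
sparse = sparsify(grad, fraction=0.25)
keep = top_k_indices(grad, fraction=0.25)
loss = masked_matching_loss([0.0] * len(grad), grad, keep)
```

Restricting the matching loss to the kept indices means each inversion iteration touches only a small part of the gradient, which is the intuition behind the reported savings.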

Implementation and Experiments

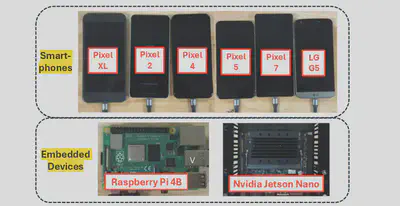

We implement our system on top of the Flower FL framework and deploy it on a mix of IoT devices: embedded devices such as Raspberry Pi and NVIDIA Jetson Nano, as well as smartphones of different models.

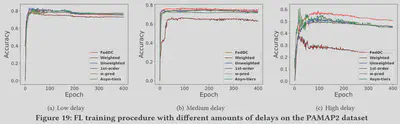

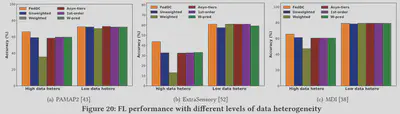

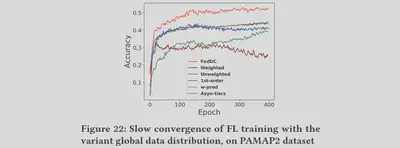

We evaluate FedDC on multiple AIoT datasets from different domains: PAMAP2 (human activity recognition), ExtraSensory (smartphone-based sensing), and MDI (disaster imagery classification). Compared with five baselines, FedDC consistently outperforms the alternatives under high device delays, under data heterogeneity, and even under non-stationary data distributions.